DragFT: Adapting Large Language Models with Dictionary and Retrieval Augmented Fine-tuning for Domain-specific Machine Translation

- Data overview:

Authors: Yangxin Jiang, Jiawei Zheng, Hanghai Hong, Xiaoli Wang, Chang Liu, Yonggui Liang, Shikai Wu

English abstract:

Large Language Models (LLMs) have shown strong potential for domain-specific machine translation (MT). However, enterprise texts such as internal knowledge bases are often characterized by dense domain terminology, internal codes (e.g., abbreviations, identifiers, and project codenames), limited context, and non-standard expressions, which make translation constraints difficult to satisfy consistently.

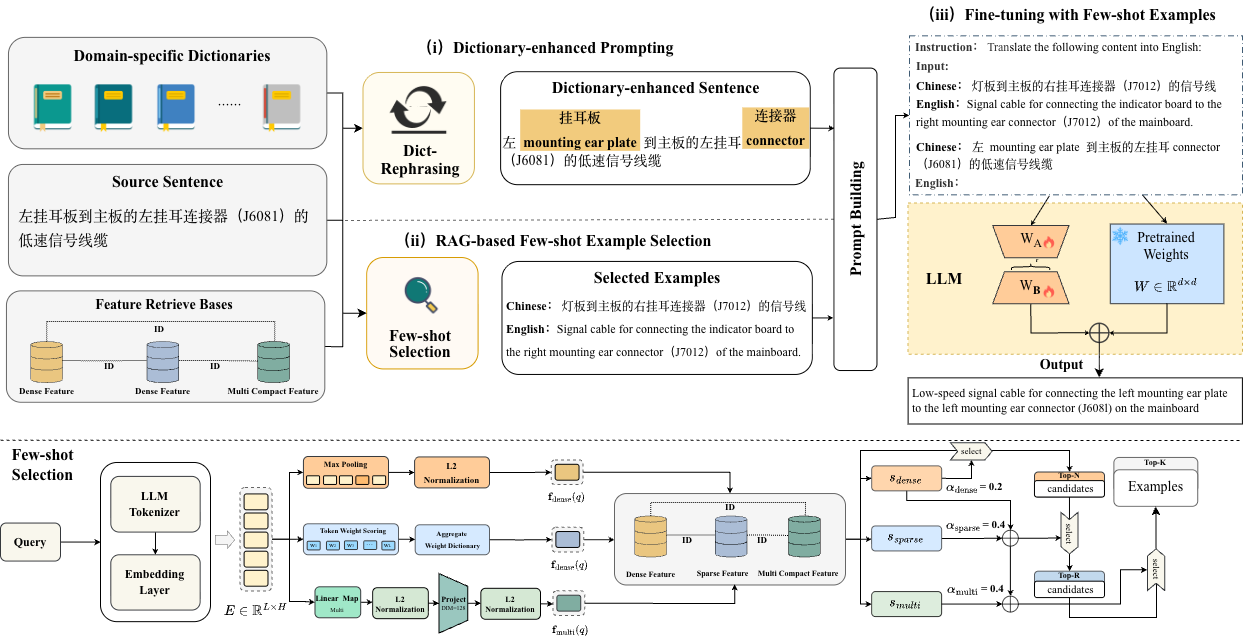

To address this issue, we propose **DragFT**, a domain adaptation framework for MT that combines three complementary techniques: (i) **dictionary-augmented prompting**, which injects domain dictionaries into prompts to improve terminological consistency; (ii) **RAG-based few-shot example selection**, which retrieves domain- and style-aligned high-quality demonstrations using LLM embeddings; and (iii) **few-shot instruction tuning**, which improves instruction following under limited labeled data. We also present **DragFT-IT**, a curated IT-domain translation dataset featuring terminology- and code-heavy inputs.
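As a rough illustration of how the first two techniques might fit together at prompt-construction time, the Python sketch below matches dictionary terms against the source sentence and retrieves the most similar translation pairs as few-shot demonstrations. It is not the authors' implementation. The function names, the prompt wording, the English-to-Chinese direction, and the sample data are all hypothetical, and a bag-of-words vector stands in for the LLM embeddings the abstract mentions.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an LLM embedding (assumption): word-count vector."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def match_terms(source, dictionary):
    """Keep only the dictionary entries whose source term occurs in the input."""
    lowered = source.lower()
    return {s: t for s, t in dictionary.items() if s.lower() in lowered}


def retrieve_examples(source, pool, k=2):
    """Return the k (source, target) pairs most similar to the input."""
    query = embed(source)
    ranked = sorted(pool, key=lambda p: cosine(query, embed(p[0])), reverse=True)
    return ranked[:k]


def build_prompt(source, dictionary, pool, k=2):
    """Assemble instruction, matched terms, retrieved demos, and the input."""
    lines = ["Translate the following text from English to Chinese."]
    terms = match_terms(source, dictionary)
    if terms:
        lines.append("Use these term translations:")
        lines += [f"- {s} -> {t}" for s, t in terms.items()]
    for src, tgt in retrieve_examples(source, pool, k):
        lines += [f"Source: {src}", f"Target: {tgt}"]
    lines += [f"Source: {source}", "Target:"]
    return "\n".join(lines)


# Hypothetical sample data, for illustration only.
dictionary = {"load balancer": "负载均衡器", "rollback": "回滚"}
pool = [
    ("Restart the load balancer after the update.", "更新后重启负载均衡器。"),
    ("The meeting starts at noon.", "会议中午开始。"),
    ("Run a rollback if the deploy fails.", "如果部署失败,请执行回滚。"),
]
source = "Check the load balancer before the rollback."
prompt = build_prompt(source, dictionary, pool, k=2)
print(prompt)
```

In this sketch the unrelated "meeting" pair is ranked last and left out of the prompt, which is the kind of noise reduction the retrieval step aims for. A real system would swap `embed` for an actual embedding model and would likely need more careful term matching than a substring test.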

Experiments on three LLM backbones (Qwen3, GLM4, and Llama3.1) show that DragFT consistently improves translation quality and terminology consistency, achieving performance competitive with or superior to strong baselines, including DeepSeek-V3.2, GPT-5.2, and Gemini-3. Further analyses indicate that these gains primarily come from more effective domain knowledge injection and reduced noise in prompting, retrieval, and tuning.

Chinese abstract:

大语言模型(LLMs)在领域特定机器翻译(MT)中展现出较强潜力,但企业文本如内部知识库通常具有领域术语密集、内部代码频繁出现(如缩略语、标识符和项目代号)、上下文有限以及表达不规范等特点,导致翻译约束难以被稳定满足。为解决这一问题,本文提出了面向机器翻译的领域适应框架 DragFT,该框架结合了三种互补技术:一是词典增强提示,通过在提示中注入领域词典提升术语一致性;二是基于 RAG 的少样本示例选择,利用 LLM 嵌入检索在领域和风格上对齐的高质量示例;三是少样本指令微调,在标注数据有限的条件下增强模型的指令遵循能力。此外,本文还构建了 DragFT-IT,这是一个面向 IT 领域的翻译数据集,其输入文本具有术语密集和代码密集的特征。在 Qwen3、GLM4 和 Llama3.1 三种 LLM 骨干模型上的实验结果表明,DragFT 能够稳定提升翻译质量和术语一致性,其性能与 DeepSeek-V3.2、GPT-5.2 和 Gemini-3 等强基线相比具有竞争力,甚至更优。进一步分析表明,这些性能提升主要源于更有效的领域知识注入,以及在提示、检索和微调过程中噪声的减少。

Figure 1: The DragFT framework.

DragFT-IT dataset: to request access to the dataset, contact jiangyangxin@stu.xmu.edu.cn.

DragFT code: to be open-sourced (coming soon).